Methodology

The IRIS platform is an advanced benchmarking tool designed to assess and compare institutional performance in research integrity.

The IRIS platform is designed to help universities look at their performance through the lens of research integrity. Instead of relying only on traditional metrics, it uses the Indicators of Research Integrity Risk framework to highlight patterns that may suggest areas of vulnerability. Institutions can see how they compare nationally and globally, and use that perspective to support thoughtful, informed decisions.

A key principle behind IRIS is the difference between identifying risk and accusing misconduct. The platform is not meant to police or punish. It works more like an early warning system. It points to patterns such as unusual authorship trends or publication behaviors that might indicate greater exposure to integrity challenges. At the same time, these patterns are not proof of wrongdoing. In many cases, they may reflect disciplinary norms or specific institutional characteristics.

For that reason, the data is only the starting point. Numbers alone cannot explain what is really happening. Proper interpretation requires context, including an understanding of governance structures, incentive systems, and institutional culture. Meaningful assessment comes from combining quantitative signals with expert judgment and qualitative insight.

The platform provides high-level benchmarking that can be useful to university leadership, research offices, funders, policy analysts, and publishers. It allows them to compare institutional profiles and monitor potential systemic vulnerabilities over time. While IRIS focuses on these broader views, SCImago can also produce detailed, document-level analyses when needed. These more granular reports are intended to support Research Integrity Officers and compliance teams who need deeper evidence to move from a general risk signal to a clear diagnosis and practical steps for improvement.

IRIS indicators are calculated using data from the latest edition of the SCImago Institutions Rankings (SIR) and are available for all higher education institutions included in the ranking. Through the platform’s interactive interface, users can explore results for each institution and each specific indicator. This makes it possible to look more closely at areas that may deserve attention and to understand how an institution compares both nationally and globally.

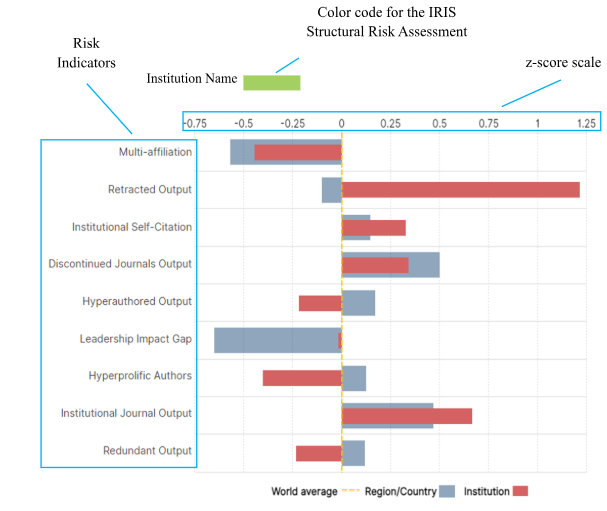

Because the indicators measure different things and operate on different scales, IRIS uses Z-score normalization to make comparisons meaningful. In simple terms, this converts the data into a common scale where zero represents the global average. Positive values indicate higher-than-average exposure to potential integrity risks, while negative values suggest lower exposure.
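The normalization described above can be sketched in a few lines of Python. This is a minimal illustration, not the platform's actual implementation: the function name `z_scores`, the sample rates, and the choice of the population standard deviation are all assumptions made for the example.

```python
from statistics import mean, pstdev


def z_scores(values):
    """Convert raw indicator values to Z-scores (0 = mean of the supplied values)."""
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation (an assumption)
    if sigma == 0:
        # All institutions have the same value, so none deviates from the mean.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical indicator values (percent) for five institutions.
rates = [0.1, 0.2, 0.2, 0.3, 1.2]
scores = z_scores(rates)
```

In this made-up sample the last institution receives a clearly positive score, while the other four receive negative scores because they sit below the sample mean.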

Unlike percentile rankings, which can sometimes smooth over important differences, Z-scores preserve the size of deviations. This matters in a system designed to detect risk. The goal is not to rank institutions competitively, but to highlight unusually strong patterns that may signal structural vulnerabilities. By focusing on the intensity of outliers rather than position in a league table, IRIS functions as an early warning tool, helping distinguish normal variation from patterns that may require closer examination.
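A small sketch can make the contrast with percentile ranks concrete. The two groups below are hypothetical and differ only in their top value; the helper names are likewise invented for the illustration.

```python
from statistics import mean, pstdev


def top_z_score(values):
    """Z-score of the largest value in the list."""
    mu, sigma = mean(values), pstdev(values)
    return (max(values) - mu) / sigma


def top_percentile_rank(values):
    """Share of values at or below the largest value, in percent."""
    top = max(values)
    return 100 * sum(v <= top for v in values) / len(values)


# Two hypothetical groups of ten institutions; only the top value differs.
mild = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
extreme = [1, 2, 3, 4, 5, 6, 7, 8, 9, 40]

same_rank = top_percentile_rank(mild) == top_percentile_rank(extreme)
z_mild = top_z_score(mild)
z_extreme = top_z_score(extreme)
```

The top institution has the same percentile rank in both groups, yet its Z-score is noticeably larger in the second group, which is the size-of-deviation information a percentile rank discards.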

To facilitate risk assessment, the platform provides two complementary levels of analysis in a global context. The first is the global assessment, which aggregates the full set of indicators to produce an overall measure of institutional risk, known as IRIS Structural Risk Assessment. The second is an indicator-level assessment, which offers insights into specific risk areas, helping institutions understand the components driving the overall structural risk and identify dimensions that may benefit from focused attention.

Most institutions fall into one of two categories: “very low structural risk”, when the aggregated indicators are well below the world average and in the lowest risk quartile, or “low structural risk”, when the structural risk is below the global average. Some are assessed as having “medium structural risk”, when risk is above the global average but not considered unusual. Finally, “significant structural risk” identifies cases where values can be considered outliers.
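The four categories can be read as a simple decision rule on the aggregated Z-score. The sketch below is only one possible reading: the text does not state the numeric cutoffs, so `lowest_quartile_cutoff` and `outlier_cutoff` (and their example values) are hypothetical parameters, as is the function name.

```python
def classify_structural_risk(z, lowest_quartile_cutoff, outlier_cutoff=2.0):
    """Map an aggregated Z-score to one of the four risk categories.

    lowest_quartile_cutoff: assumed boundary of the lowest risk quartile
        (expected to be negative).
    outlier_cutoff: assumed Z-score above which a value counts as an outlier.
    """
    if z >= outlier_cutoff:
        return "significant structural risk"
    if z > 0:
        return "medium structural risk"
    if z <= lowest_quartile_cutoff:
        return "very low structural risk"
    return "low structural risk"


# Hypothetical aggregated scores for four institutions.
labels = [classify_structural_risk(z, lowest_quartile_cutoff=-0.7)
          for z in [-1.2, -0.3, 0.8, 3.1]]
```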

This indicator measures the proportion of an institution’s output where at least one author lists more than one institutional affiliation. While multiple affiliations are often a legitimate result of researcher mobility, dual appointments, or partnerships between universities and teaching hospitals, disproportionately high rates can signal strategic attempts to inflate institutional credit or “affiliation shopping”. This dynamic dilutes the recognition due to researchers' home institutions while artificially boosting the indicators of those incentivizing the extra affiliation. Calculated as the percentage of multi-affiliated output, this indicator helps identify previously undetected multi-affiliation practices, highlighting areas where credit attribution may warrant review rather than implying immediate misconduct (Halevi et al., 2023).
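As a rough illustration of the calculation, the percentage could be computed as follows. The data layout (each paper as a list of authors, each author as a list of affiliation names) and the function name are assumptions made for this sketch.

```python
def multi_affiliation_rate(papers):
    """Percentage of papers where at least one author lists more than one affiliation.

    papers: list of papers; each paper is a list of authors;
            each author is a list of affiliation names.
    """
    if not papers:
        return 0.0
    flagged = sum(
        any(len(set(affiliations)) > 1 for affiliations in paper)
        for paper in papers
    )
    return 100 * flagged / len(papers)


# Hypothetical output of four papers; only the second has a multi-affiliated author.
sample = [
    [["Univ A"], ["Univ A"]],
    [["Univ A", "Univ B"], ["Univ A"]],
    [["Univ A"]],
    [["Univ A"], ["Univ C"]],
]
rate = multi_affiliation_rate(sample)
```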

Rate of Retracted Output

The Rate of Retracted Output tracks the share of an institution’s output that has been formally withdrawn from the scientific record. Retractions serve as an objective signal that a correction was necessary, but these corrections range from honest errors to serious misconduct such as plagiarism or fabrication (Fanelli et al., 2015). Crucially, a higher rate may sometimes reflect an institution’s rigorous commitment to post-publication quality control and transparent oversight. Therefore, this indicator acts as a prompt for qualitative verification to determine whether the pattern stems from systemic integrity failures or from a robust culture of scientific correction.

Rate of Institutional Self-Citation

This metric reflects the proportion of citations received by an institution that originate from its own authors, providing insight into the balance between internal recognition and external validation. While a baseline level of self-citation is a natural byproduct of cumulative research programs, excessive levels can indicate scientific insularity or an attempt to inflate impact. It is important to note that specialized or niche institutions may naturally exhibit higher rates due to limited global peer networks; however, the potential risk of limited external validation remains a relevant area for strategic reflection.
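The proportion described here could be computed along the following lines. This is a sketch under stated assumptions: each citation received is represented by the set of institutions of the citing work's authors, and the function name is invented for the example.

```python
def institutional_self_citation_rate(institution, citing_affiliations):
    """Percentage of received citations that come from the institution itself.

    citing_affiliations: one set of institution names per citation received,
        listing the affiliations of the citing work's authors.
    """
    if not citing_affiliations:
        return 0.0
    own = sum(institution in affiliations for affiliations in citing_affiliations)
    return 100 * own / len(citing_affiliations)


# Hypothetical: five citations received by "Univ A", two of them from its own authors.
citations = [{"Univ A"}, {"Univ B"}, {"Univ A", "Univ C"}, {"Univ D"}, {"Univ B"}]
rate = institutional_self_citation_rate("Univ A", citations)
```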

Rate of Output in Discontinued Journals

This indicator represents the share of research output published in journals that have been removed from major bibliographic databases (such as Scopus) due to quality or ethical concerns (Cortegiani et al., 2020). High values in this indicator suggest potential vulnerabilities in "due diligence," exposing the institution to predatory or questionable publishing practices. While isolated cases may reflect context-specific decisions, a consistently high proportion signals a systemic need for better support structures to help researchers identify and avoid low-quality dissemination venues, in order to achieve long-term scientific reliability.

Rate of Hyper-Authored Output

The Rate of Hyper-Authored Output identifies the proportion of works featuring an exceptionally large number of co-authors, defined as statistical outliers relative to their specific discipline and publication year. While extensive collaboration is standard in fields like high-energy physics or large-scale clinical trials and can explain high rates in specialized institutions, anomalous patterns in other areas can obscure individual contributions and signal practices such as honorary or guest authorship. A detailed review contextualizing author counts by discipline can help to distinguish between necessary and unjustified hyper-authorship rates.
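The text does not say which outlier rule is used, so the sketch below substitutes a common one as an assumption: a paper is flagged when its author count exceeds the upper Tukey fence (Q3 + 1.5 × IQR) within its own discipline and year. The function name, the tuple layout, and the minimum group size of four are also illustrative choices.

```python
from collections import defaultdict
from statistics import quantiles


def hyper_authored_ids(papers, k=1.5):
    """Return ids of papers whose author count is an outlier in its group.

    papers: iterable of (paper_id, discipline, year, author_count) tuples.
    Outlier rule (assumed, not from the source): count > Q3 + k * IQR,
    computed separately for each (discipline, year) group.
    """
    groups = defaultdict(list)
    for paper_id, discipline, year, author_count in papers:
        groups[(discipline, year)].append((paper_id, author_count))

    flagged = set()
    for members in groups.values():
        counts = [count for _, count in members]
        if len(counts) < 4:
            continue  # too few papers to estimate quartiles sensibly
        q1, _, q3 = quantiles(counts, n=4)
        upper_fence = q3 + k * (q3 - q1)
        flagged.update(pid for pid, count in members if count > upper_fence)
    return flagged


# Hypothetical data: large author lists are normal in the physics group,
# but one biology paper stands out within its own group.
sample = (
    [(f"b{i}", "biology", 2023, n) for i, n in enumerate([3, 4, 4, 5, 5, 6, 300], 1)]
    + [(f"p{i}", "physics", 2023, n) for i, n in enumerate([2000, 2500, 2800, 3000], 1)]
)
flagged = hyper_authored_ids(sample)
```

Grouping before testing is what keeps the physics papers from being flagged: their author counts are large in absolute terms but unremarkable within their own discipline and year.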

Gap between the Impact of Total Output and the Impact of Output with Corresponding Author from the Institution

The Gap in Normalized Impact compares an institution’s overall Field-Weighted Citation Impact (FWCI) against the impact of output where it holds a leading authorship position (Moya-Anegón et al., 2013). A significant positive gap suggests that high-impact results rely heavily on external partners, pointing to a "sustainability risk" where scientific visibility is contingent on collaboration rather than autonomous leadership. While often a necessary stage of capacity building for emerging institutions, high values serve as a constructive signal to reflect on the balance between collaborative participation and the development of independent, endogenous research capacity.
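One way to picture the gap is as the difference between two averages. In the sketch below, each paper carries a field-normalized citation score and a flag saying whether the institution led it; averaging per-paper scores with a plain mean is an assumption, as are the function name and the sample values.

```python
from statistics import mean


def normalized_impact_gap(papers):
    """Overall mean normalized impact minus the mean for institution-led papers.

    papers: list of (normalized_impact, led_by_institution) pairs.
    Returns None when the institution leads none of the papers.
    """
    all_scores = [score for score, _ in papers]
    led_scores = [score for score, is_led in papers if is_led]
    if not led_scores:
        return None
    return mean(all_scores) - mean(led_scores)


# Hypothetical institution: its two collaborative papers score higher
# than the two papers it leads itself.
sample = [(2.0, False), (3.0, False), (1.0, True), (1.0, True)]
gap = normalized_impact_gap(sample)
```

A positive result means the overall impact exceeds the impact of the output the institution leads, which is the pattern the text associates with reliance on external partners.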

Rate of Hyperprolific Authors

The Rate of Hyperprolific Authors monitors the proportion of institutional contributions linked to researchers exceeding a threshold of 25 works per year. While high productivity characterizes successful team leaders in experimental sciences, extreme outliers can signal a disconnect between output volume and meaningful intellectual contribution (Ioannidis et al., 2018). Viewed at the aggregate institutional level, this metric filters out most legitimate disciplinary norms to highlight systemic imbalances, encouraging a review of whether production incentives are compromising the depth and transparency of individual research contributions.
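The threshold logic can be sketched as follows. The 25-works-per-year threshold comes from the text; everything else is an assumption for illustration, in particular that an author's yearly count is taken from the supplied list of works and that a work counts as "linked" when any of its authors exceeds the threshold in that year.

```python
from collections import Counter

THRESHOLD = 25  # works per year, as stated in the text


def hyperprolific_output_rate(papers, threshold=THRESHOLD):
    """Percentage of works with at least one author above the yearly threshold.

    papers: list of (year, author_ids) tuples, one per work.
    """
    if not papers:
        return 0.0
    # Count works per (author, year); set() guards against duplicate author ids.
    works_per_author_year = Counter(
        (author, year) for year, authors in papers for author in set(authors)
    )
    linked = sum(
        any(works_per_author_year[(author, year)] > threshold for author in authors)
        for year, authors in papers
    )
    return 100 * linked / len(papers)


# Hypothetical output: author "a1" signs 26 works in 2023, "b1" signs 14.
sample = [(2023, ["a1"])] * 26 + [(2023, ["b1"])] * 14
rate = hyperprolific_output_rate(sample)
```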

Rate of Output in Institutional Journals

The Rate of Output in Institutional Journals measures the proportion of scholarly production appearing in venues owned or managed by the institution itself. While these journals support local dissemination and early-career researchers, excessive reliance on them raises concerns regarding editorial independence, peer-review objectivity, and potential endogamy. High rates are not inherently problematic if the journals meet competitive standards; rather, the indicator prompts institutions to ensure that internal publishing strategies do not compromise external validation or create conflicts of interest in research evaluation.

Rate of Redundant Output

This indicator aims to detect cases where a single coherent research study has been fragmented into multiple works, a practice often referred to as "salami slicing". Contributions are labeled as redundant when they have unusually high bibliographic overlap (over 70%) with other works published by the same authors in the same year. A high overlap of cited references can simply be the result of publishing from a single project or of working in a very small field of research. However, although research continuity naturally requires citing foundational work, excessive fragmentation of a single study into multiple "minimal publishable units" distorts scientific evidence and strains the peer-review system. This indicator alerts institutions to excessively high rates, enabling them to conduct detailed internal reviews of their scientific production.
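The overlap test can be sketched as below. Only the 70% threshold and the same-year condition come from the text. The overlap measure (share of a paper's references that also appear in the other work), the reading of "same authors" as "at least one author in common", and all names are assumptions made for the illustration.

```python
def reference_overlap(refs, other_refs):
    """Share of `refs` that also appears in `other_refs` (0.0 to 1.0)."""
    refs, other_refs = set(refs), set(other_refs)
    if not refs:
        return 0.0
    return len(refs & other_refs) / len(refs)


def redundant_ids(papers, threshold=0.70):
    """Return ids of papers whose references overlap heavily with a sibling work.

    papers: list of dicts with keys 'id', 'year', 'authors', 'refs'.
    The pairwise loop is quadratic, which is fine for a small illustration.
    """
    flagged = set()
    for paper in papers:
        for other in papers:
            if other is paper or other["year"] != paper["year"]:
                continue
            if not set(paper["authors"]) & set(other["authors"]):
                continue  # assumed reading of "same authors": one in common
            if reference_overlap(paper["refs"], other["refs"]) > threshold:
                flagged.add(paper["id"])
                break
    return flagged


# Hypothetical data: p1 and p2 share 8 of their 10 references.
shared = [f"r{i}" for i in range(1, 9)]
sample = [
    {"id": "p1", "year": 2023, "authors": ["a1", "a2"], "refs": shared + ["r9", "r10"]},
    {"id": "p2", "year": 2023, "authors": ["a1", "a2"], "refs": shared + ["x1", "x2"]},
    {"id": "p3", "year": 2023, "authors": ["a1"], "refs": ["r1", "r2"] + [f"y{i}" for i in range(8)]},
    {"id": "p4", "year": 2022, "authors": ["a1", "a2"], "refs": shared + ["r9", "r10"]},
]
flagged = redundant_ids(sample)
```

In this sample p3 shares authors and year with the others but only two references, and p4 has the same references as p1 but a different year, so neither is flagged.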

Bibliography

Halevi, G., Rogers, G., Guerrero-Bote, V. P., & De-Moya-Anegón, F. (2023). Multi-affiliation: a growing problem of scientific integrity. Profesional de la Información, 32(4). https://doi.org/10.3145/epi.2023.jul.01

Fanelli, D., Costas, R., & Larivière, V. (2015). Misconduct policies, academic culture and career stage, not gender or pressures to publish, affect scientific integrity. PLoS ONE, 10(6), e0127556.

Cortegiani, A., Ippolito, M., Ingoglia, G., Manca, A., Cugusi, L., Severin, A., ... & Giarratano, A. (2020). Citations and metrics of journals discontinued from Scopus for publication concerns: the GhoS(t)copus Project. F1000Research, 9, 415.

Moya-Anegón, F., Guerrero-Bote, V. P., Bornmann, L., & Moed, H. F. (2013). The research guarantors of scientific papers and the output counting: a promising new approach. Scientometrics, 97(2), 421–434. http://dx.doi.org/10.1007/s11192-013-1046-0

De-Moya-Anegón, F., Guerrero-Bote, V. P., López-Illescas, C., & Moed, H. F. (2018). Statistical relationships between corresponding authorship, international co-authorship and citation impact of national research systems. Journal of Informetrics, 12(4), 1251–1262.

Ioannidis, J. P., Klavans, R., & Boyack, K. W. (2018). Thousands of scientists publish a paper every five days. Nature, 561(7722), 167–169.

Bar-Ilan, J., & Halevi, G. (2018). Temporal characteristics of retracted articles. Scientometrics, 116(3), 1771–1783.

Bar-Ilan, J., & Halevi, G. (2019). Retracted Research Articles from the RetractionWatch Database. ISSI 2019, pp. 322–328.